The Scaling Paradox

Companies like Google, X (Twitter), Spotify, and Atlassian have built hugely popular product lines and often embody Agile, DevOps, and software architecture best practices. Yet the quality of their products has noticeably eroded. This is the Scaling Paradox: much of it is an unavoidable byproduct of scale and success, but some organizations handle it far better than others.

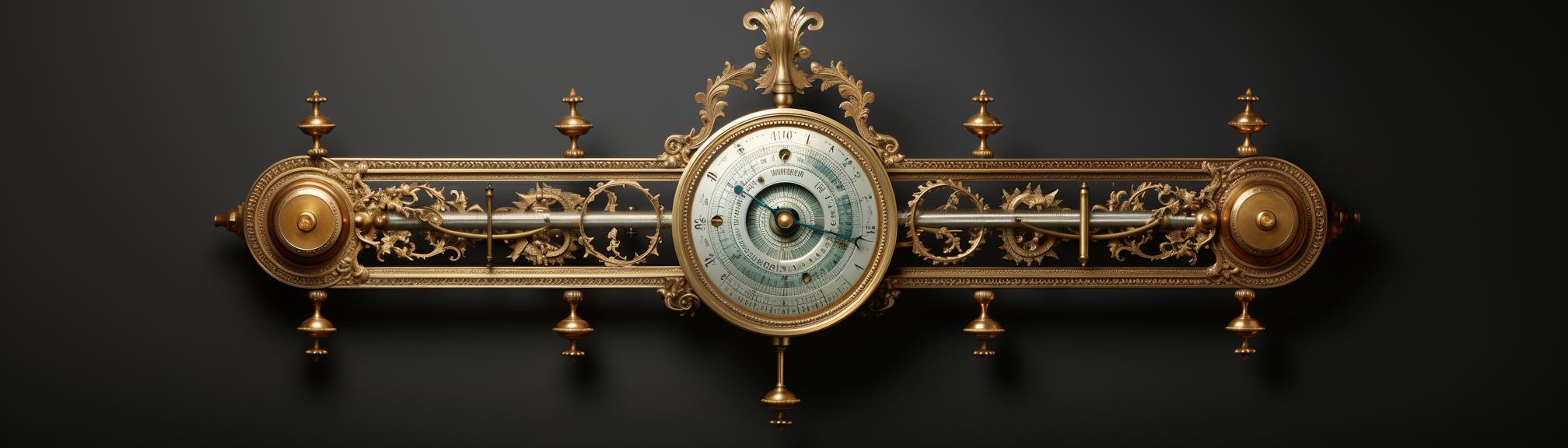

In this post, I’ll discuss my barometer for software quality and briefly analyze companies that are failing and others that are succeeding at building good software.

The Quality Barometer

The Software Quality Barometer is anchored in a feedback-driven approach, with customer sentiment as its core indicator. It is not just a set of quantitative metrics; it’s a dynamic, adaptive framework that prioritizes understanding user needs and frustrations.

From a user experience standpoint, the barometer mandates a clean, simple, and effective interface. This ensures that users are not lost in the labyrinth of features and find value every step of the way. Consistency and predictability are key, honoring the Principle of Least Astonishment (POLA) to deliver an experience that meets user expectations, rather than bewildering them with unexpected behaviors or outcomes.

On an ethical front, the barometer calls for a system that evolves to increase its value to the user. The focus is on user-centric improvements that enrich the experience, rather than manipulative tactics that merely boost the business metrics.

In essence, the quality barometer serves as a robust guide for creating and sustaining software that is not just functional and reliable but also ethically responsible and deeply aligned with its users’ actual needs and sentiments. It acts as both a lens and a mirror: a lens to examine the product through the eyes of the customer, and a mirror reflecting whether the system is evolving in a direction that enriches the user, not just the business.

Dissecting the Giants

Modern tech giants are organizations that not only delivered compelling products, services, and experiences but also successfully scaled those experiences to an incredible degree. However, this scale often comes at a cost.

Google’s focus on ad-serving products leaves other areas neglected, resulting in frequent product sunsetting and eroding trust. Persistent bugs remain unaddressed, and their home assistant devices have declined in reliability and usefulness over time as they added more capabilities and dialects.

Spotify’s backend is robust and rarely experiences noticeable downtime. However, their user interface has grown increasingly confusing, and their group sessions are bug-ridden, leading to an inconsistent user experience.

X, formerly Twitter, became less reliable after Elon Musk purchased it and experimented with various features that were later reverted. The platform is now trying to expand into a ‘do-it-all’ service, adding to its unreliability.

Atlassian’s products have seen little beneficial change over the years, resulting in a stale and confusing interface. They have also split functionality across different products, complicating the user experience further. The introduction of team-managed versus company-managed projects adds limitations and doesn’t simplify the UI as intended.

In the strategy video game Stellaris, players encounter “fallen empires,” civilizations that once ruled vast portions of the galaxy but have since become stagnant and isolated. Though still technologically and militarily potent, they are reluctant to adapt and expand. The tech giants discussed earlier bear striking resemblances to these fallen empires: powerful but increasingly vulnerable to disruption.

Every so often, Stellaris introduces a “galactic crisis,” shaking up the existing balance and compelling the fallen empires to awaken and act. In the realm of technology, the closest disruptor we’ve witnessed is the emergence of Large Language Models (LLMs). These AI-driven platforms have the potential to upend established norms in various sectors. As we anticipate their broader impact, it’s worth watching whether they will serve as the catalyst that reinvigorates these “fallen empires” of the tech world.

The Power of Listening

The tech companies that have managed to maintain or even improve the quality of their offerings have one thing in common: an effective customer feedback loop.

AgileBits, the company behind 1Password, has built its reputation on an aggressive problem-solving approach. They actively monitor their support forums and respond to issues rapidly. The engagement goes beyond simple acknowledgment; they troubleshoot and fix the issues quickly. This proactive stance makes users feel heard and improves the quality of the product at the same time.

JetBrains takes a different yet equally effective approach by segmenting their users. Their suite of products, although built on a shared platform, is designed with particular user experiences in mind. They understand that a tailored UX isn’t just an accessory but a core feature that can delight users and sustain long-term engagement.

Basecamp has built its business model around questioning the conventional wisdom that pervades the tech industry. From moving back to on-premises data centers to critiquing the disruptive nature of real-time communication platforms, they adopt what many consider contrarian stances. Doing so gives them a deeper understanding of the often overlooked subtleties that affect user productivity and satisfaction.

The impact of a robust customer feedback loop is multi-dimensional. Firstly, it builds trust between the organization and its user base. Users are more likely to stick with a product they feel has their best interests at heart. Secondly, it becomes an engine for sustained quality. Issues are identified and fixed; user suggestions often evolve into feature enhancements. Thirdly, it is an early warning system for potential systemic issues, enabling companies to pivot or refine their strategies before hitting a snag.

Companies like AgileBits, JetBrains, and Basecamp demonstrate that even as organizations grow in scale and complexity, a focus on customer-centricity can be their compass.

Root Causes

Scaling is a double-edged sword. On the one side, you’ve got exponential user growth and revenue (I like money); on the other, the organization is now facing unprecedented challenges beyond the technical:

Resource Allocation

One of the most significant challenges when scaling is judicious resource allocation. As organizations scale, so does the complexity of their systems and the resources needed to maintain them. That complexity grows faster still as the organization adds more features, diversifies products, or merges other platforms into its ecosystem. Get this wrong, and you’re suddenly firefighting on multiple fronts, pulling focus away from quality and user experience.

Feedback Loop Breakdown

As the scale grows, maintaining an effective customer feedback loop becomes tougher. The distance between the user base and the developers often grows, diluting the impact of user input on the system. When this happens, quality doesn’t just stagnate—it deteriorates.

Many organizations don’t notice this breakdown until it’s too late. Others attempt to rectify it by collecting large amounts of quantitative data. This is valuable and goes a long way toward staying in tune with users, but it only goes so far. Quantitative insights should be paired with qualitative ones: customer interviews, focus groups, domain expert opinions, and so on.
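One way to picture how the two kinds of data complement each other is a small sketch that flags "blind spots": product areas where the numbers look healthy but users keep raising complaints in interviews. Everything here is a hypothetical illustration, not a method any of the companies above use: the `blind_spots` function, the per-area health scores between 0 and 1, the interview tags, and both thresholds are assumptions.

```python
from collections import Counter


def blind_spots(metrics, interview_tags, healthy_threshold=0.8, min_mentions=3):
    """Return areas whose metrics look healthy but that users keep complaining about.

    metrics: hypothetical per-area health scores in [0, 1] from quantitative data.
    interview_tags: one area name per complaint raised in interviews (qualitative data).
    Both thresholds are illustrative assumptions.
    """
    mentions = Counter(interview_tags)
    return sorted(
        area
        for area, score in metrics.items()
        if score >= healthy_threshold and mentions[area] >= min_mentions
    )


# Made-up example data: dashboards say playlists are fine, interviews disagree.
metrics = {"search": 0.92, "playlists": 0.85, "group_sessions": 0.60}
tags = ["playlists", "playlists", "search", "playlists", "group_sessions"]
print(blind_spots(metrics, tags))
```

With this made-up data, only `playlists` is flagged: its score passes the threshold, yet it comes up three times in interviews. An area like `group_sessions` is not a blind spot in this sense, because the metrics already show the problem.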

Lack of Focus

Amid rapid scaling, many organizations lose sight of what made them successful in the first place. They try to become everything to everyone, resulting in a diluted product that doesn’t excel at anything. It’s a classic case of losing the forest for the trees.

Google is notorious for its corporate ADHD. They are constantly rolling out new products only to sunset them a few years later when they realize they aren’t able to profit from them. While having the backbone to shut down a project that’s bleeding money is admirable, it also shows poor strategic planning and a lack of commitment. That lack of commitment, in turn, erodes the public’s trust in one’s future commitments.

Technical Debt

Scaling often comes with rushed decisions. Quick hacks to get a feature live can accumulate as technical debt, and like any debt, the interest compounds. As the system scales, so does the burden of this debt, affecting system stability and future development speed.

As the debt accumulates, your development team may spend more time on bug fixes and less on feature development. Over time, this can erode competitive advantage, reduce team morale, and even risk the stability and security of your product.
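To make the compounding concrete, here is a toy model of how debt servicing can crowd out feature work sprint after sprint. It is a rough sketch under stated assumptions, not a measurement of any real team: the `feature_capacity` function, the 10% starting debt share, the 5% per-sprint "interest", and the one-for-one effect of repayment are all hypothetical.

```python
def feature_capacity(sprints, debt_share=0.10, interest_rate=0.05, paydown=0.0):
    """Return the fraction of each sprint left over for feature work.

    debt_share: fraction of a sprint initially lost to servicing debt (assumed).
    interest_rate: per-sprint growth of that share when debt is left alone (assumed).
    paydown: fraction of each sprint deliberately reserved for repaying debt (assumed).
    """
    capacity = []
    for _ in range(sprints):
        # Only reserve repayment time while there is debt left to repay.
        repay = min(paydown, debt_share)
        capacity.append(max(0.0, 1.0 - debt_share - repay))
        # Unpaid debt compounds; repayment reduces it one-for-one (a simplification).
        debt_share = min(1.0, max(0.0, debt_share * (1 + interest_rate) - repay))
    return capacity


ignored = feature_capacity(20)               # never pay the debt down
repaid = feature_capacity(20, paydown=0.02)  # reserve 2% of every sprint for repayment
print(f"ignored: {ignored[0]:.0%} -> {ignored[-1]:.0%}")
print(f"repaid:  {repaid[0]:.0%} -> {repaid[-1]:.0%}")
```

Under these assumed numbers, ignoring the debt lets feature capacity slide from 90% to roughly 75% over 20 sprints, while a small, steady repayment budget costs a little up front and then steadily wins capacity back.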

Organizations facing a growing pile of technical debt should track and document it, allocate time to address it, invest in training so the team has the tools to repair existing debt and prevent new debt, and ensure executive leadership understands the business risks associated with it. Technical debt shouldn’t just be the concern of the engineering department; it needs to be understood and addressed at every level of the organization.

Quality is a Journey

Quality isn’t a destination but a journey. The barometer we discussed is not static; it must evolve with your product, your team, and the broader ecosystem as new updates, features, and market needs arrive. Continual monitoring and periodic assessments are key to making informed decisions, especially when you face trade-offs between speed and quality.

In addition, remember that quality is everyone’s job. Extend it beyond engineering to become an organization-wide key performance indicator, enriching your quality framework with insights from various departments.
