AI Artists Have No Idea What a Head Is, or How Arms or Hands Work

As marvelous as some of the images generated by the current crop of Stable Diffusion Generative AI models may be, there are subjects that it cannot render well. In this post, I will be visiting some of the nightmare fuel that Stable Diffusion can inadvertently produce. Specifically, I will be examining its inability to comprehend and generate bodies, heads, and hands. These are things that still need authorship by a human artist (at least for now).

I’ve been exploring the liminal dreamscape realm of Generative AI models, checking their fit, observing the war. Some of the things I’ve found have been deeply moving, some inspiring, some terrifying or disturbing. This is part of a series about my journey and the best practices (and anti-practices) I find for these new tools.

I asked Midjourney for a yellow snake, and it gave me this:

In the generative model for Stable Diffusion, the algorithms at play are determining the pixels that live next door in a probabilistic way, having been trained on an immense dataset of images classified (through a phase of human auditing) as “beautiful”.

But Midjourney doesn’t know what a head is, and the most likely pixels to be painted next to any part of a snake’s body is… more snake body. And so, we get a nightmarish python donut, or random squiggles of pythonic flesh that look more like a disassembled octopus.

Similarly, when I asked for an image of two men fighting, it produced this:

The AI Artist has no idea where one arm should end and the other begin. Where we have a reasonable expectation that an artist should know where the flying and punching limbs start and stop, it instead joins the two combatants together and has no way of knowing that it has gone astray or that the result will look strange to human eyes.

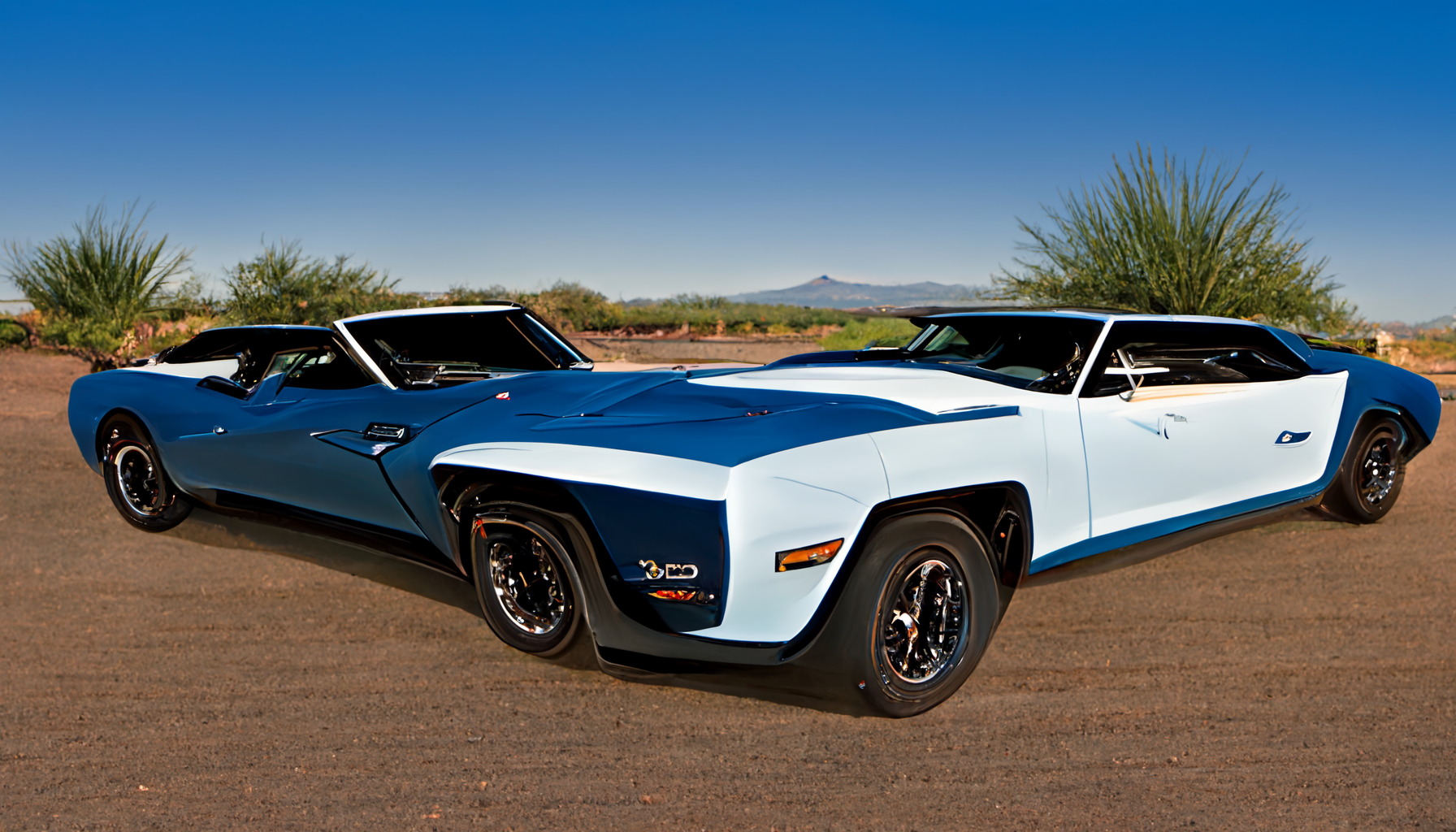

These kinds of errors are not solely restricted to the domain of body horror, but can impact objects as well. The AI artist cannot tell how a car should really look. When asked for a Camaro in a desert, it gave me this:

The car body has grown in several directions, and the AI Artist has no concept of rightness or wrongness to apply to the generated art. It simply paints in the direction, and with the pixels, it finds earlier examples of in its gigantic database of images. When all that data, and all those images are overlaid upon each other, these kind of smooshes are inevitable (and common).

These are perfect illustrations of the way the Stable Diffusion algorithms mindlessly colonize the substrate of the image canvas, with no concept of heads, or arms, or aerodynamic drag.

The generation of the image by the Generative AI model is almost bacterial, a fungal growth moving outwards from its starting point. The AI artist has no grasp on how the entire picture will end up looking, and only finds out at the same time you do, once its pixels have taken over the entire screen.

Another problem this causes is the inability to decide which direction fingers should point, and how many of them there should be. In fact, since it has no idea what fingers are, it is typically a spaghetti mess of digits (when they even exist).

The earlier prompt for two men fighting also generated a result that looked like this:

Even when the Generative AI model can produce an otherwise complicated and haunting image, it doesn’t know how to make the hands look right. When asked for a futuristic cyberpunk girl, it produces this:

Fingers are hard for humans to draw, too. They are delicate, and jointed, and incredibly important to our species. The AI Artist, with its millions upon millions of drawings of fingers, can splash them onto a canvas, but it has no idea how they should be oriented.

Of course, there is a way to correct these wayward images - Dall-e 2, for example, has the ability to “inpaint”, so it is possible to erase the squiggly spiderhands and then re-generate those areas with more specific prompts, and this tends to give better results (it is easier for the AI artist to generate “hands” as a discrete generation, from its database of images), which allows you to duct tape the image into some semblance of overall coherency. But Midjourney itself has no inpainting tool, so the generation of sane-seeming images currently requires multiple passes with two different tools (Midjourney, then Dall-E 2).

Obviously, future tools and iterations of these same tools are likely to include multiple automatic stages of generation, which will build in the concepts that are missing so far. They will automate these (relatively) “manual” steps. The results they will produce, especially as the competition in this space heats up, will be far superior to what is being generated now.

But until then, these tools clearly have limitations. Not only are heads and hands a problem, but these Stable Diffusion Generative AI models have huge difficulty with faces and eyes as well. I will cover these in the next post.

And even in cases where the glitching isn’t immediately obvious, there are similar issues being manifested at an ideological layer which are far more insidious (and perhaps even dangerous). Look forward to further coverage of the limitations of these intriguing and powerful new tools, as I continue to investigate their weaknesses and determine where and when it is best practice to deploy the images they produce.

Comments